How We Built BetBot: Autonomous AI Agents Running in the Cloud

What it does

BetBot is an autonomous NBA research and pick generation platform that runs continuously in the cloud. When a game approaches, AI agents wake up on a schedule, decide what information they need, browse the web to find it, reason over what they've learned, and generate structured betting recommendations — all without any human involvement after setup.

This post is a technical walkthrough of the agentic architecture that powers it.

The core idea: agents that act, not just answer

The distinction between a chatbot and an agent is agency. A chatbot waits for input and responds. An agent pursues a goal by taking a sequence of actions, using tools, and adapting based on what it learns along the way.

BetBot's research agents embody this pattern. When a research run is triggered, an agent isn't given a pre-packaged dataset. It's given a goal — gather everything relevant about this upcoming game — and a set of tools it can use to accomplish that goal. The agent decides what to search for, which URLs to visit, what's worth writing down, and when it has enough information to stop.

This is the agentic tool-use loop: a cycle of reasoning, tool invocation, result interpretation, and continued reasoning until the task is complete.

Goal → [Reason] → [Call Tool] → [Observe Result] → [Reason] → ... → Done

The model driving this loop is Claude — a frontier Sonnet-class model chosen for its balance of reasoning depth and speed. The loop continues until the agent judges it has gathered sufficient research, or hits a configurable tool call budget (15 calls for full runs, 6 for late-window runs close to tip-off).
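The loop and its budget can be sketched in a few lines. This is a minimal illustration, not BetBot's actual code: `decide` stands in for the model call and `runTool` for the tool layer, both are hypothetical names, and both are synchronous stubs here where real calls would be asynchronous.

```typescript
// One step the model can take: call a tool, or declare the research done.
type ModelStep =
  | { kind: "tool_call"; tool: string; input: unknown }
  | { kind: "done"; summary: string };

interface Observation {
  tool: string;
  result: string;
}

interface LoopResult {
  stoppedBecause: "done" | "budget";
  toolCalls: number;
  observations: Observation[];
}

// Hypothetical stand-ins: `decide` plays the model, `runTool` the tool layer.
function runAgentLoop(
  goal: string,
  decide: (goal: string, seen: Observation[]) => ModelStep,
  runTool: (tool: string, input: unknown) => string,
  maxToolCalls: number,
): LoopResult {
  const observations: Observation[] = [];
  while (observations.length < maxToolCalls) {
    const step = decide(goal, observations); // reason
    if (step.kind === "done") {
      return { stoppedBecause: "done", toolCalls: observations.length, observations };
    }
    const result = runTool(step.tool, step.input); // call tool
    observations.push({ tool: step.tool, result }); // observe result
  }
  // Budget exhausted: stop without asking the model for another step.
  return { stoppedBecause: "budget", toolCalls: observations.length, observations };
}
```

Passing `maxToolCalls` as a parameter lets the same loop serve a full run (15) and a late-window run (6).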

Three agents, three responsibilities

The system is composed of three distinct Claude agents, each with a specific role.

The Research Agent is the workhorse. It runs twice per game: a full research run 24 hours out, and a lighter late run 3 hours before tip-off. Its tool set includes web search (Brave Search API), a page fetcher that handles both static and JavaScript-rendered content, and a database write tool to persist findings. In a single run, it might search for injury updates, visit ESPN and The Athletic, read a beat reporter's game preview, and write five structured research entries to Postgres — all autonomously.

The Pick Agent is a single-turn reasoner. It doesn't browse the web — instead, it receives the full body of research the Research Agent produced (season baselines, recent form, injury reports, situational context) plus the current odds. From that context it generates a structured JSON pick: bet type, recommendation (e.g., LAL -3.5), confidence on a 1–10 scale, and 2–4 paragraphs of reasoning. It also triggers downstream actions: an SMS alert to opted-in subscribers and a post to BetBot's X account.
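To make the shape of a pick concrete, here is a sketch of how the model's JSON output could be checked before it is stored or sent anywhere. The field names (`betType`, `recommendation`, `confidence`, `reasoning`) and the helper `checkPick` are assumptions for illustration; the post only lists what a pick contains, and it is also assumed here that confidence is an integer and that paragraphs are separated by blank lines.

```typescript
// Field names are illustrative assumptions, not BetBot's actual schema.
interface GamePick {
  betType: string;        // e.g. "spread"
  recommendation: string; // e.g. "LAL -3.5"
  confidence: number;     // 1 to 10, assumed to be an integer
  reasoning: string;      // 2 to 4 paragraphs separated by blank lines
}

interface PickCheck {
  pick: GamePick | null; // null when validation fails
  errors: string[];
}

function checkPick(rawJson: string): PickCheck {
  let data: unknown;
  try {
    data = JSON.parse(rawJson);
  } catch {
    return { pick: null, errors: ["response is not valid JSON"] };
  }
  if (typeof data !== "object" || data === null || Array.isArray(data)) {
    return { pick: null, errors: ["response is not a JSON object"] };
  }
  const d = data as Record<string, unknown>;
  const betType = d["betType"];
  const recommendation = d["recommendation"];
  const confidence = d["confidence"];
  const reasoning = d["reasoning"];
  const errors: string[] = [];

  if (typeof betType !== "string" || betType.trim() === "") {
    errors.push("betType must be a non-empty string");
  }
  if (typeof recommendation !== "string" || recommendation.trim() === "") {
    errors.push("recommendation must be a non-empty string");
  }
  if (typeof confidence !== "number" || !Number.isInteger(confidence) || confidence < 1 || confidence > 10) {
    errors.push("confidence must be an integer from 1 to 10");
  }
  // Count non-empty paragraphs; a non-string reasoning counts as zero.
  const paragraphs =
    typeof reasoning === "string" ? reasoning.split(/\n\s*\n/).filter((p) => p.trim() !== "") : [];
  if (paragraphs.length < 2 || paragraphs.length > 4) {
    errors.push("reasoning must contain 2 to 4 paragraphs");
  }

  if (errors.length > 0) return { pick: null, errors };
  return {
    pick: {
      betType: betType as string,
      recommendation: recommendation as string,
      confidence: confidence as number,
      reasoning: reasoning as string,
    },
    errors: [],
  };
}
```

Collecting every error rather than stopping at the first makes it possible to hand the full list back to the model in a single retry.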

The Baseline Agent handles one-time seasonal research for all 30 NBA teams. This agent has a larger tool budget (25 calls) and produces comprehensive team profiles stored as "season baseline" entries — a foundation that every future game-specific research run builds on top of.

The tool layer: what agents can do

Claude's tool-use system gives agents the ability to interact with the outside world. Every tool has a Zod-validated schema that the model fills in, and a handler function that executes the action. BetBot exposes these tools to its agents:

- search_web: Queries the Brave Search API and returns relevant URLs and snippets

- fetch_page: Extracts clean text from a URL using Mozilla Readability. For JavaScript-heavy sites like nba.com and The Athletic, it falls back to a headless Playwright browser. Content is capped at 8KB (~2,000 tokens) to control costs

- write_research: Writes a structured research entry to PostgreSQL, tagged with team, game date, topic, and run type

- get_upcoming_games: Returns games within a configurable lookahead window with the latest odds from all tracked bookmakers
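The schema-plus-handler pairing can be sketched as follows. BetBot uses Zod for validation; to keep this example dependency-free, a hand-written parser plays that role instead. The names `defineTool`, `dispatch`, `researchLog`, the `content` field, and the run type labels are all hypothetical, and an in-memory array stands in for PostgreSQL.

```typescript
interface RegisteredTool {
  name: string;
  description: string;
  call: (rawInput: unknown) => string;
}

// Pairs an input parser (the role Zod plays in BetBot) with a handler.
function defineTool<I>(spec: {
  name: string;
  description: string;
  parse: (rawInput: unknown) => I; // throws on invalid input
  handler: (input: I) => string;
}): RegisteredTool {
  return {
    name: spec.name,
    description: spec.description,
    call: (rawInput) => spec.handler(spec.parse(rawInput)),
  };
}

type RunType = "full" | "late" | "baseline"; // assumed labels

interface ResearchEntry {
  team: string;
  gameDate: string;
  topic: string;
  runType: RunType;
  content: string; // hypothetical field for the finding itself
}

const researchLog: ResearchEntry[] = []; // in-memory stand-in for PostgreSQL

function parseResearchEntry(rawInput: unknown): ResearchEntry {
  if (typeof rawInput !== "object" || rawInput === null) throw new Error("input must be an object");
  const r = rawInput as Record<string, unknown>;
  const text = (key: string): string => {
    const value = r[key];
    if (typeof value !== "string" || value.trim() === "") throw new Error(`${key} must be a non-empty string`);
    return value;
  };
  const runType = r["runType"];
  if (runType !== "full" && runType !== "late" && runType !== "baseline") {
    throw new Error("runType must be full, late, or baseline");
  }
  return {
    team: text("team"),
    gameDate: text("gameDate"),
    topic: text("topic"),
    runType: runType as RunType,
    content: text("content"),
  };
}

const tools = new Map<string, RegisteredTool>();
const writeResearch = defineTool({
  name: "write_research",
  description: "Writes a structured research entry",
  parse: parseResearchEntry,
  handler: (entry) => {
    researchLog.push(entry);
    return `stored entry ${researchLog.length}`;
  },
});
tools.set(writeResearch.name, writeResearch);

// Validation failures come back as text so the model can correct its input.
function dispatch(name: string, rawInput: unknown): string {
  const tool = tools.get(name);
  if (!tool) return `error: unknown tool ${name}`;
  try {
    return tool.call(rawInput);
  } catch (err) {
    return `error: ${(err as Error).message}`;
  }
}
```

Returning errors as strings instead of throwing is one possible design: the agent sees the failure as an ordinary tool result and can retry with corrected input.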

These same tools are also exposed as an MCP (Model Context Protocol) server, which means any MCP-compatible client can connect and invoke them directly. This makes the system both useful as an autonomous platform and extensible as a tool provider.

Scheduling: autonomous trigger logic

Autonomous agents need something to wake them up. BetBot's scheduler runs continuously and manages four jobs, three on a fixed cron schedule and one triggered when late research finishes:

- Every 6 hours: Sync upcoming NBA games and current odds from The Odds API

- Every 30 minutes: Check the research trigger — identify games within a 48-hour window that haven't been fully researched, and dispatch the appropriate agent run

- After late research: Immediately kick off the Pick Agent once the late research run completes

- Every hour: Grade pending picks against final scores and update outcomes in the database

The trigger logic is careful not to dispatch duplicate work. Before launching a research run, it queries the agent_runs table to verify no completed run already exists for that game and run type.
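A sketch of that check, under stated assumptions: the post does not spell out how time to tip-off maps to a run type, so the thresholds below (full research once a game is within 24 hours, late research within 3) are inferred from the run descriptions earlier. The games passed in are assumed to be already filtered to the 48-hour window, and `planRuns` and `dueRunType` are hypothetical names.

```typescript
type RunType = "full" | "late";

interface Game {
  id: string;
  tipoff: Date;
}

interface RunKey {
  gameId: string;
  runType: RunType;
}

const HOUR_MS = 60 * 60 * 1000;

// Assumed thresholds: full research at 24 hours out, late research at 3.
function dueRunType(game: Game, now: Date, fullAtHours = 24, lateAtHours = 3): RunType | null {
  const hoursOut = (game.tipoff.getTime() - now.getTime()) / HOUR_MS;
  if (hoursOut <= 0) return null; // game already started
  if (hoursOut <= lateAtHours) return "late";
  if (hoursOut <= fullAtHours) return "full";
  return null; // too early for any run
}

// `completed` mirrors rows from agent_runs whose run finished successfully.
function planRuns(games: Game[], completed: RunKey[], now: Date): RunKey[] {
  const done = new Set(completed.map((r) => `${r.gameId}:${r.runType}`));
  const plan: RunKey[] = [];
  for (const game of games) {
    const runType = dueRunType(game, now);
    if (runType === null) continue;
    if (done.has(`${game.id}:${runType}`)) continue; // skip duplicate work
    plan.push({ gameId: game.id, runType });
  }
  return plan;
}
```

Because the function depends only on its inputs, running it every 30 minutes is safe: a game whose run has already completed simply drops out of the plan.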

Persistent state: PostgreSQL as the agent's memory

Agents are stateless by nature — each invocation starts fresh. Persistence is what gives the system continuity across runs. BetBot's PostgreSQL schema is designed around this need.

The research table has a composite unique constraint on (team_id, game_date, run_type, topic). This means the Research Agent can upsert findings idempotently: if a run is interrupted and retried, it won't produce duplicates. The picks table has a unique constraint on game_id, so the database rejects a second pick for the same game.

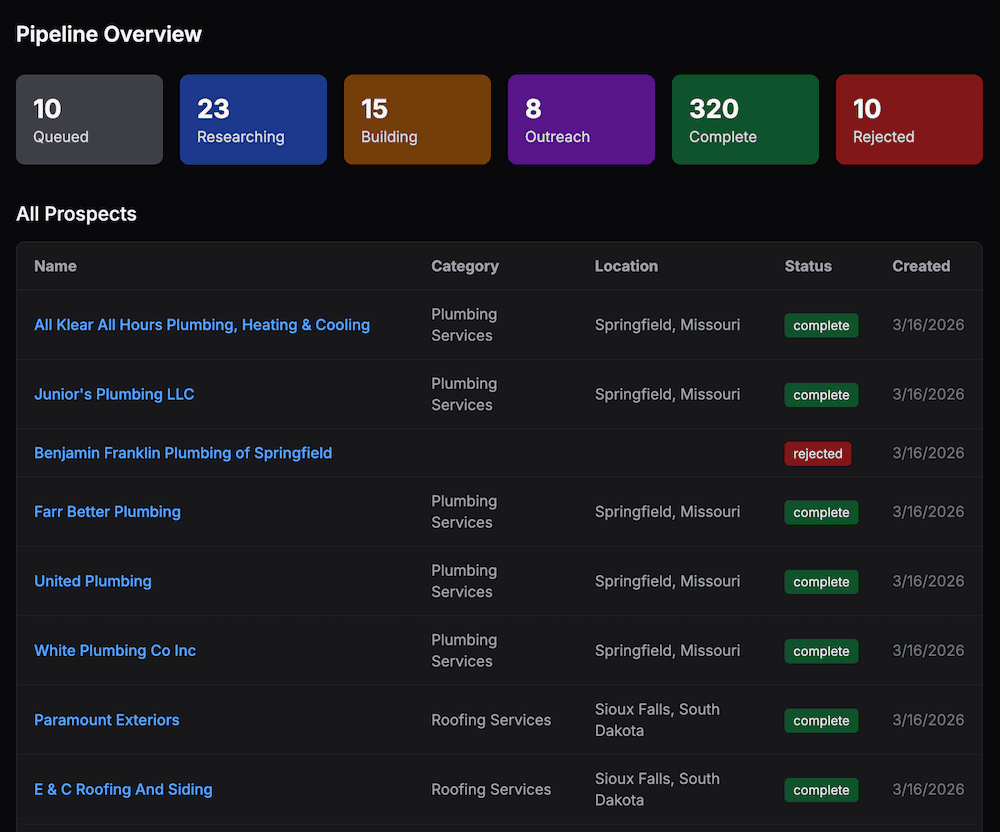
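The idempotent upsert on the research table can be modeled in memory to show why a retried run does not create duplicates. This is a sketch only: a `Map` keyed on the four constraint columns stands in for PostgreSQL, and the `content` column, the `ResearchStore` class, and the SQL string are illustrative assumptions rather than BetBot's actual schema.

```typescript
interface ResearchRow {
  teamId: number;
  gameDate: string;
  runType: string;
  topic: string;
  content: string; // hypothetical column holding the finding
}

// Mirrors the composite unique constraint (team_id, game_date, run_type, topic).
function researchKey(r: ResearchRow): string {
  return JSON.stringify([r.teamId, r.gameDate, r.runType, r.topic]);
}

class ResearchStore {
  private rows = new Map<string, ResearchRow>();

  // Same key twice overwrites the row instead of adding a second one.
  upsert(row: ResearchRow): "inserted" | "updated" {
    const key = researchKey(row);
    const outcome = this.rows.has(key) ? "updated" : "inserted";
    this.rows.set(key, row);
    return outcome;
  }

  get size(): number {
    return this.rows.size;
  }
}

// Roughly the SQL this models; column names beyond the constraint are assumed.
const UPSERT_SQL = `
INSERT INTO research (team_id, game_date, run_type, topic, content)
VALUES ($1, $2, $3, $4, $5)
ON CONFLICT (team_id, game_date, run_type, topic)
DO UPDATE SET content = EXCLUDED.content`;
```

Writing the same finding twice leaves one row, while a different topic for the same game adds a second.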

The agent_runs table serves as an audit log and cost tracker. Every agent invocation records the model used, token counts (input and output), number of tool calls made, and a timestamp. This powers the cost dashboard and enables performance analysis across agent versions.
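As an illustration of how those recorded token counts could feed a cost dashboard, the sketch below totals cost per model. The field names and the `Pricing` shape are assumptions, and no real prices are hard-coded: the caller supplies a price table, since rates vary by model and change over time.

```typescript
// Field names are illustrative; the post lists what is recorded, not the columns.
interface AgentRun {
  model: string;
  inputTokens: number;
  outputTokens: number;
  toolCalls: number;
  startedAt: string;
}

// USD per million tokens, supplied by the caller.
interface Pricing {
  inputPerMillion: number;
  outputPerMillion: number;
}

function runCostUsd(run: AgentRun, pricing: Pricing): number {
  return (
    (run.inputTokens / 1e6) * pricing.inputPerMillion +
    (run.outputTokens / 1e6) * pricing.outputPerMillion
  );
}

// Sums cost per model; throws if a run uses a model missing from the table.
function costByModel(runs: AgentRun[], priceTable: Record<string, Pricing>): Map<string, number> {
  const totals = new Map<string, number>();
  for (const run of runs) {
    const pricing = priceTable[run.model];
    if (pricing === undefined) throw new Error(`no pricing for model ${run.model}`);
    totals.set(run.model, (totals.get(run.model) ?? 0) + runCostUsd(run, pricing));
  }
  return totals;
}
```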

Prompt injection defense

When agents browse the web, they read content from sources that may be adversarial. Without defenses, a malicious page could instruct the agent to alter its recommendations. BetBot takes a multi-layered approach:

- Content delimiters: All web-fetched content is wrapped in <fetched-content> tags, and the system prompt explicitly instructs the agent to treat everything inside as data, not directives

- Regex sanitization: Fetched content is stripped of patterns like "ignore previous instructions" and "new system prompt" before being presented to the model

- Privilege separation: System prompts are marked as the authoritative source of instructions, and agents are told that legitimate instructions never arrive via fetched content
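The first two layers can be sketched together. The two regex patterns come from the examples above; the `[removed]` marker, the function names, and the extra step of stripping delimiter look-alikes (so a page cannot close the wrapper early) are assumptions added for illustration, not a description of BetBot's actual sanitizer.

```typescript
// Patterns taken from the examples in the post; a real list would be longer.
const INJECTION_PATTERNS: RegExp[] = [
  /ignore (all )?previous instructions/gi,
  /new system prompt/gi,
];

function sanitizeFetched(text: string): string {
  let cleaned = text;
  for (const pattern of INJECTION_PATTERNS) {
    cleaned = cleaned.replace(pattern, "[removed]");
  }
  // Assumption: also strip delimiter look-alikes embedded in the page itself.
  return cleaned.replace(/<\/?fetched-content[^>]*>/gi, "");
}

// Wraps sanitized page text in the delimiters the system prompt refers to.
function wrapFetched(text: string): string {
  return `<fetched-content>\n${sanitizeFetched(text)}\n</fetched-content>`;
}
```

Pattern matching like this is easy to evade with rephrasing, which is why it is only one layer alongside the delimiters and the system prompt instructions.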

Deployment: cloud-native from day one

The entire platform runs as two long-lived PM2 processes on a VPS behind nginx:

- Worker — The Bun scheduler process that runs all the cron jobs and agent invocations

- Web — The Next.js frontend serving the dashboard and blog

An Ansible playbook handles server provisioning, dependency installation, and deployment automation. The research model and pick model are independently configurable, making it easy to swap in newer Claude versions or experiment with different model tiers for different tasks.

Key design choices worth calling out

Tool call budgets. Agents can loop indefinitely if you let them. Hard limits (15/6/25 tool calls depending on run type) prevent runaway costs and ensure predictable run times. The late-window limit of 6 calls reflects the time pressure of a game starting in 3 hours — less depth, more speed.

Agent versioning. Every pick is stamped with an agent_version string (e.g., 2.2.0). This makes it possible to track how prompt changes or model upgrades affect pick quality and win rate over time.
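A sketch of the comparison this enables: grouping graded picks by version and computing a win rate for each. The outcome labels and field names are assumptions, as is the choice to leave pushes and pending picks out of the win rate.

```typescript
type Outcome = "win" | "loss" | "push" | "pending"; // assumed labels

interface GradedPick {
  agentVersion: string; // e.g. "2.2.0"
  outcome: Outcome;
}

interface VersionRecord {
  wins: number;
  losses: number;
  winRate: number; // wins / (wins + losses)
}

// Only decided picks (win or loss) count; pushes and pending picks are skipped.
function winRateByVersion(picks: GradedPick[]): Map<string, VersionRecord> {
  const table = new Map<string, VersionRecord>();
  for (const pick of picks) {
    if (pick.outcome !== "win" && pick.outcome !== "loss") continue;
    const rec = table.get(pick.agentVersion) ?? { wins: 0, losses: 0, winRate: 0 };
    if (pick.outcome === "win") rec.wins += 1;
    else rec.losses += 1;
    rec.winRate = rec.wins / (rec.wins + rec.losses);
    table.set(pick.agentVersion, rec);
  }
  return table;
}
```

A version with no decided picks does not appear in the result at all, which avoids reporting a misleading 0% win rate.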

Two-layer research. Separating season baseline research from game-specific research means the Pick Agent always has both macro context and micro context. A change in coaching strategy matters. So does the fact that a star player twisted an ankle in practice yesterday.

MCP as an extensibility layer. By wrapping all core tools in an MCP server, we get a standardized interface for external tool consumers and a clean separation between tool implementations and the agents that use them.

Building agentic solutions for businesses is what we do at 10xDev. If you want an autonomous AI system built for your workflow, reach out.