BetBot

Custom AI Agent Development

- Claude AI (Agentic Tool-Use Loop)

- Playwright Browser Automation

- PostgreSQL Persistent State

- Prompt Injection Defense

- Ansible Automated Deployment

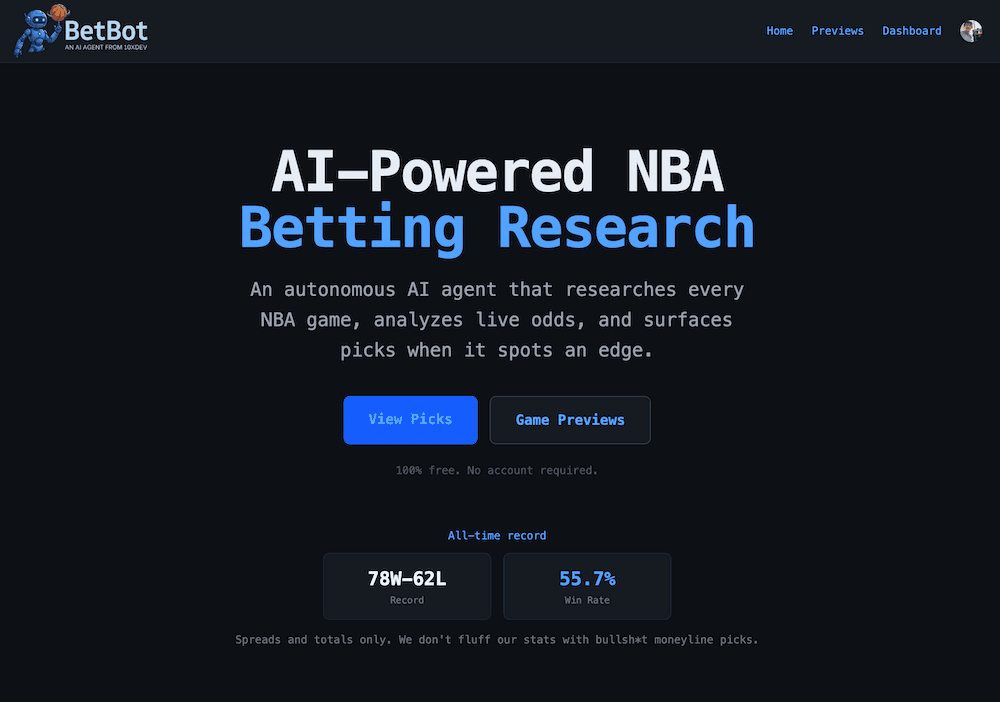

BetBot uses AI agents to provide NBA betting research.

BetBot is an autonomous NBA research and pick generation platform that runs continuously in the cloud. When a game approaches, AI agents wake up on a schedule, decide what information they need, browse the web to find it, reason over what they've learned, and generate structured betting recommendations — all without any human involvement after setup.

Three Agents, Three Responsibilities

The system is composed of three distinct Claude AI agents, each with a specific role. The Research Agent is the workhorse — it runs twice per game: a full research run 24 hours out, and a lighter late run 3 hours before tip-off. It autonomously searches for injury updates, reads beat reporter notebooks, visits ESPN and The Athletic, and writes structured research entries to PostgreSQL. The Pick Agent receives the full body of research plus current odds and generates a structured JSON pick with bet type, recommendation, confidence score, and detailed reasoning. It also triggers downstream actions: SMS alerts to subscribers and posts to BetBot's X account. The Baseline Agent handles one-time seasonal research for all 30 NBA teams, producing comprehensive team profiles that every future game-specific research run builds on top of.

Agentic Tool-Use Architecture

BetBot uses an agentic tool-use loop — a cycle of reasoning, tool invocation, result interpretation, and continued reasoning until the task is complete. The Research Agent isn't handed a pre-packaged dataset. It's given a goal and a set of tools — web search via the Brave Search API, a page fetcher that handles both static and JavaScript-rendered content (using Playwright for sites like nba.com and The Athletic), and a database writer to persist findings. The agent decides what to search for, which URLs to visit, what's worth writing down, and when it has enough information to stop.

Persistent Memory & Two-Layer Research

Agents are stateless by nature — persistence is what gives the system continuity across runs. BetBot's PostgreSQL schema is built around two distinct layers of research. Season Baselines are deep-context profiles for every team covering coaching philosophy, roster strengths, scheduling patterns, and situational tendencies. Game-Specific Research captures time-sensitive analysis tied to a specific matchup: injury availability, current form, rest advantages, and motivational context. The Pick Agent receives both layers simultaneously before generating a recommendation — the same combination that sharp bettors spend hours assembling manually.

Autonomous Scheduling

The scheduler runs continuously and manages four cron jobs: syncing upcoming NBA games and odds every 6 hours, checking the research trigger every 30 minutes to dispatch agent runs for games within a 48-hour window, immediately kicking off the Pick Agent after late research completes, and grading pending picks against final scores every hour. The trigger logic prevents duplicate work by querying the agent_runs table before launching any research run.

Prompt Injection Defense

When agents browse the web, they read content from sources that may be adversarial. BetBot takes a multi-layered approach: all web-fetched content is wrapped in delimiter tags with explicit instructions to treat it as data, regex sanitization strips known injection patterns, and privilege separation ensures system prompts are the sole authoritative source of instructions.

MCP Server & Extensibility

All core tools are also exposed as an MCP (Model Context Protocol) server, providing a standardized interface for external tool consumers. This makes BetBot both useful as an autonomous platform and extensible as a tool provider for any MCP-compatible client.

Full Transparency

The dashboard shows every pick ever made with outcomes, the full reasoning behind each recommendation, confidence scores, token usage, and estimated cost per run. Every pick is stored with a timestamp, graded automatically after games complete, and stamped with an agent version string to track how prompt changes or model upgrades affect pick quality over time.